Not All AI Is Generative. And That's the Point.

Most AI projects do not fail because the model is bad. They fail because the wrong kind of intelligence was applied to the problem.

Right now, everything is being treated like a generative AI problem. Draft with a model. Reason with a model. Decide with a model. Enforce with a model. Act with a model.

That is lazy thinking.

AI is not a single thing. It is a set of very different tools with very different failure modes. In healthcare especially, using the wrong one does not just create bad output. It creates quiet risk that looks impressive until it matters.

The real skill is not knowing how to build AI.

It is knowing which kind of intelligence belongs where.

The Generative AI Trap

Generative AI feels powerful because it is flexible.

It talks well. It demos well. It adapts. It sounds confident. It gives the impression of understanding.

That makes it seductive.

But flexibility is not reliability. And in healthcare, reliability beats creativity almost every time.

There is a reason regulated industries rely on constraints, thresholds, and guardrails. Systems that touch real decisions need predictability before they need expressiveness.

Generative AI is exceptional at synthesis.

It is dangerous at enforcement.

When teams treat every problem as a generative one, they end up with systems that feel smart and behave unpredictably. These systems do not usually fail loudly. They fail by eroding trust.

Once trust is gone, adoption goes with it.

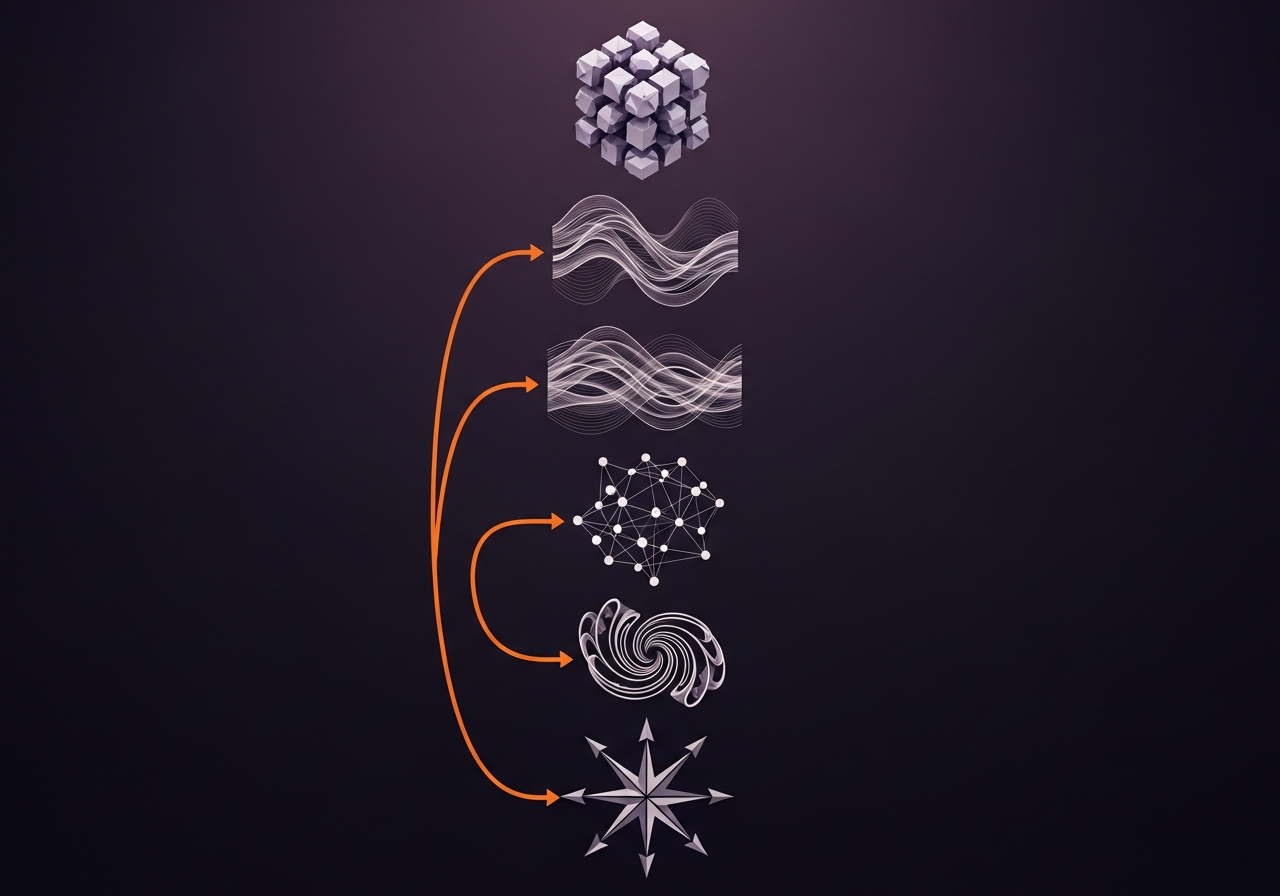

The Five Kinds of Intelligence People Keep Mixing Up

Most of what people call AI in practice falls into five categories. These are not academic definitions. They are decision styles.

Knowing which one you are using matters more than how advanced it is.

1. Rules-Based Systems

When certainty matters more than creativity

Rules-based systems are boring. That is why they work.

If the logic is known, repeatable, and non-negotiable, you should not be asking a model to reason about it.

Examples in healthcare:

Capital approval thresholds

Compliance checks

Eligibility criteria

Policy enforcement

Guardrails around financial or clinical risk

These systems answer questions like: Is this allowed? Does this violate a constraint? Can this proceed?

Rules do not hallucinate. They enforce.

If your system is deciding whether something is allowed, generative AI is almost always the wrong choice.
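Here is a minimal Python sketch of a rules-based capital approval check, to make the contrast concrete. The role names, dollar limits, and the `CapitalRequest` and `check_capital_request` names are hypothetical and exist only for illustration. What matters is the shape: the same request always gets the same answer, and every rejection names the rule it broke.

```python
from dataclasses import dataclass

# Hypothetical approval limits by role, in dollars. Not taken from any real policy.
APPROVAL_LIMITS = {
    "department_head": 50_000,
    "cfo": 500_000,
    "board": float("inf"),
}


@dataclass(frozen=True)
class CapitalRequest:
    amount: float
    approver_role: str
    has_compliance_signoff: bool


def check_capital_request(req: CapitalRequest) -> tuple[bool, list[str]]:
    """Return (allowed, violations). Deterministic: same input, same answer."""
    violations = []
    limit = APPROVAL_LIMITS.get(req.approver_role)
    if limit is None:
        violations.append(f"unknown approver role: {req.approver_role}")
    elif req.amount > limit:
        violations.append(
            f"amount {req.amount:,.0f} exceeds {req.approver_role} limit {limit:,.0f}"
        )
    if not req.has_compliance_signoff:
        violations.append("missing compliance sign-off")
    return (not violations, violations)


allowed, why = check_capital_request(CapitalRequest(120_000, "department_head", True))
print(allowed, why)
```

There is nothing to prompt and nothing to tune. When policy changes, someone edits a table, and that change can be reviewed and audited like any other.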

2. Statistical and Predictive Models

When probability beats explanation

Predictive models are about likelihood, not narrative.

They answer questions like: How likely is failure? What risk is increasing? Where should we look first?

Examples:

Equipment failure prediction

Demand forecasting

Readmission risk

Vendor risk scoring

Capacity planning

These models do not explain themselves well. They estimate. That is fine.

If your problem is about patterns and likelihoods, reach for statistical intelligence first.
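A predictive model returns a probability, not a story. The sketch below assumes a simple logistic model that scores equipment failure risk and ranks assets by it, which answers "where should we look first?" The coefficients, feature names, and asset IDs are hypothetical placeholders. A real model would be fit on historical failure data and validated before anyone relied on it.

```python
import math

# Hypothetical coefficients. In practice these would be fit on historical
# failure data (for example with logistic regression), not hand-picked.
INTERCEPT = -4.0
WEIGHTS = {"age_years": 0.25, "errors_last_30d": 0.4, "overdue_maintenance": 1.5}


def failure_probability(features: dict[str, float]) -> float:
    """Logistic model: p = 1 / (1 + exp(-(b0 + sum of w_i * x_i)))."""
    z = INTERCEPT + sum(w * features.get(name, 0.0) for name, w in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))


def rank_by_risk(assets: dict[str, dict[str, float]]) -> list[tuple[str, float]]:
    """Sort assets by estimated failure probability, highest first."""
    scored = [(asset_id, failure_probability(f)) for asset_id, f in assets.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


fleet = {
    "mri-01": {"age_years": 9, "errors_last_30d": 3, "overdue_maintenance": 1},
    "ct-02": {"age_years": 4, "errors_last_30d": 0, "overdue_maintenance": 0},
}
for asset_id, p in rank_by_risk(fleet):
    print(f"{asset_id}: {p:.2f}")
```

The output is an estimate and a priority order. It does not say why an asset is at risk, and it does not need to in order to be useful.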

3. Retrieval-Augmented Generation (RAG)

When the answer exists but no one can find it

RAG is not intelligence. It is disciplined retrieval plus synthesis.

It works when the information already exists, is scattered across systems, people waste time searching, and the cost of being slightly wrong is low.

Examples:

Contract interpretation

Policy lookup

Vendor documentation

Internal knowledge bases

Regulatory guidance navigation

RAG systems do not know things. They fetch and summarize.

If your system needs to cite where an answer came from, RAG beats pure generation every time.
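The retrieve-then-cite pattern fits in a few lines. This toy version assumes simple word-overlap scoring as a stand-in for the vector search most production RAG systems use. It returns passages with their sources attached and refuses when nothing matches. The sample documents, the stopword list, and the function names are made up for illustration. In a real system a generative model would summarize the retrieved passages; here they are quoted directly so each citation stays easy to check.

```python
import re
from dataclasses import dataclass

# Tiny illustrative stopword list so filler words do not count as matches.
STOPWORDS = {"a", "an", "the", "for", "of", "to", "is", "on", "how", "what", "much", "does"}


@dataclass(frozen=True)
class Passage:
    source: str  # where the text came from, so answers can cite it
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercased word set, minus stopwords."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def retrieve(query: str, corpus: list[Passage], k: int = 2) -> list[Passage]:
    """Rank passages by words shared with the query (stand-in for vector search)."""
    query_terms = tokenize(query)
    scored = [(len(query_terms & tokenize(p.text)), p) for p in corpus]
    matches = [(score, p) for score, p in scored if score > 0]
    matches.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in matches[:k]]


def answer_with_citations(query: str, corpus: list[Passage]) -> str:
    """Quote retrieved passages with their sources; refuse instead of guessing."""
    hits = retrieve(query, corpus)
    if not hits:
        return "No supporting documents found."
    return "\n".join(f"{p.text} [{p.source}]" for p in hits)


corpus = [
    Passage("Vendor contract A, section 4.2", "Renewal requires 90 days written notice."),
    Passage("Travel policy, section 1", "Economy class is required for flights under six hours."),
]
print(answer_with_citations("How much notice for contract renewal?", corpus))
```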

4. Generative Systems

When synthesis is the goal, not certainty

This is where generative AI belongs.

Generative systems are excellent when the task is drafting, summarizing, exploring scenarios, comparing options, or supporting human judgment.

Examples:

Capital planning scenarios

First pass analysis

Executive briefings

Narrative synthesis of complex inputs

Decision support, not decision making

The key phrase is support. Generative AI is a co-pilot, not an authority.

If the answer is allowed to vary and a human owns the outcome, generative AI is appropriate. If not, stop.
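One way to encode "co-pilot, not authority" is to make generated text a draft by construction. The minimal sketch below assumes a hypothetical `Draft` type that cannot be published until a named human approves it, plus a gate that mirrors the two-question test above. None of these names come from a specific library.

```python
from dataclasses import dataclass
from typing import Optional


def generation_is_appropriate(answer_may_vary: bool, human_owns_outcome: bool) -> bool:
    """The two-question test: both must be true, otherwise stop."""
    return answer_may_vary and human_owns_outcome


@dataclass
class Draft:
    """Generated text stays a draft until a named human takes ownership."""
    text: str
    generated_by: str                  # model or tool identifier (illustrative)
    approved_by: Optional[str] = None

    def approve(self, reviewer: str) -> None:
        if not reviewer.strip():
            raise ValueError("approval requires a named reviewer")
        self.approved_by = reviewer

    def publish(self) -> str:
        if self.approved_by is None:
            raise PermissionError("no human owner yet; refusing to publish")
        return self.text


brief = Draft(text="Q3 capital scenarios: first-pass summary", generated_by="drafting-model")
brief.approve("finance reviewer")
print(brief.publish())
```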

5. Agentic Systems

When intelligence is allowed to act

Agentic systems are not a new model type. They are a control pattern.

An agent is any system that receives or sets a goal, chooses actions, executes across tools or workflows, observes outcomes, and iterates.

This is where most teams get into trouble.

Rules, predictive models, RAG, and generative systems answer questions.

Agentic systems do things.

That difference is everything.

The risk with agentic systems is not hallucination. It is mis-scoped autonomy.

Where Agentic Systems Actually Belong

Agentic systems work when four conditions are true:

The action space is constrained. The agent can only do a limited, well-defined set of things.

Guardrails exist upstream. Rules define what the agent is allowed to attempt.

Consequences are reversible. A human can unwind mistakes.

Accountability is explicit. It is clear who owns the outcome.

If any of these are false, do not deploy an agent.
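The four conditions translate directly into a pre-deployment check. This is a minimal sketch: the `AgentDesign` fields, the action names, and the blocker messages are hypothetical, and a real review would involve people, not just a function. It reports every failed condition rather than stopping at the first, so the team sees the whole gap at once.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AgentDesign:
    allowed_actions: Optional[frozenset[str]]  # None means the action space is open-ended
    upstream_rules: tuple[str, ...]            # rules checked before any attempt
    reversible: bool                           # can a human unwind mistakes?
    accountable_owner: str                     # who owns the outcome ("" if no one)


def deployment_blockers(design: AgentDesign) -> list[str]:
    """List every failed condition. An empty list is the only 'go'."""
    blockers = []
    if design.allowed_actions is None:
        blockers.append("action space is not constrained")
    if not design.upstream_rules:
        blockers.append("no upstream guardrails")
    if not design.reversible:
        blockers.append("consequences are not reversible")
    if not design.accountable_owner.strip():
        blockers.append("no explicit accountable owner")
    return blockers


rfp_agent = AgentDesign(
    allowed_actions=frozenset({"send_deadline_reminder", "assemble_submission"}),
    upstream_rules=("vendor_contact_policy",),
    reversible=True,
    accountable_owner="procurement lead",
)
print(deployment_blockers(rfp_agent))
```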

Good agentic use cases in healthcare:

Capital sourcing coordination — An agent monitors asset risk signals, assembles sourcing packets, and routes them to stakeholders. It does not decide. It prepares.

RFP orchestration — An agent tracks milestones, enforces deadlines, nudges vendors, and assembles submissions. Judgment remains human.

Contract lifecycle hygiene — An agent monitors renewal dates, flags risk clauses, and escalates anomalies. No contract changes without review.

Data hygiene workflows — An agent detects inconsistencies, proposes normalization, and queues corrections for approval.

The agent proposes. Humans approve.
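"The agent proposes. Humans approve." can be enforced in code rather than left to policy. The sketch below assumes a hypothetical `ApprovalQueue`. The agent may only propose actions from an allowlist, nothing runs until a human marks it approved, and the list of proposals doubles as an audit trail. The action name and payload in the usage lines are illustrative, modeled on the data hygiene example.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Proposal:
    action: str
    payload: dict
    rationale: str
    status: str = "pending"  # pending -> approved/rejected; approved -> executed


@dataclass
class ApprovalQueue:
    allowed_actions: frozenset[str]
    proposals: list[Proposal] = field(default_factory=list)

    def propose(self, action: str, payload: dict, rationale: str) -> Proposal:
        """Called by the agent. Records intent; never executes anything."""
        if action not in self.allowed_actions:
            raise ValueError(f"action not permitted: {action}")
        proposal = Proposal(action, payload, rationale)
        self.proposals.append(proposal)
        return proposal

    def review(self, proposal: Proposal, approve: bool) -> None:
        """Called by a human reviewer."""
        if proposal.status != "pending":
            raise ValueError("only pending proposals can be reviewed")
        proposal.status = "approved" if approve else "rejected"

    def execute_approved(self, handlers: dict[str, Callable[[dict], None]]) -> int:
        """Run only human-approved proposals; return how many ran."""
        ran = 0
        for proposal in self.proposals:
            if proposal.status == "approved":
                handlers[proposal.action](proposal.payload)
                proposal.status = "executed"
                ran += 1
        return ran


queue = ApprovalQueue(allowed_actions=frozenset({"normalize_vendor_name"}))
fix = queue.propose(
    "normalize_vendor_name",
    {"from": "Acme Med.", "to": "Acme Medical"},
    rationale="two spellings of one vendor",
)
applied = []
queue.review(fix, approve=True)
queue.execute_approved({"normalize_vendor_name": applied.append})
print(applied)
```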

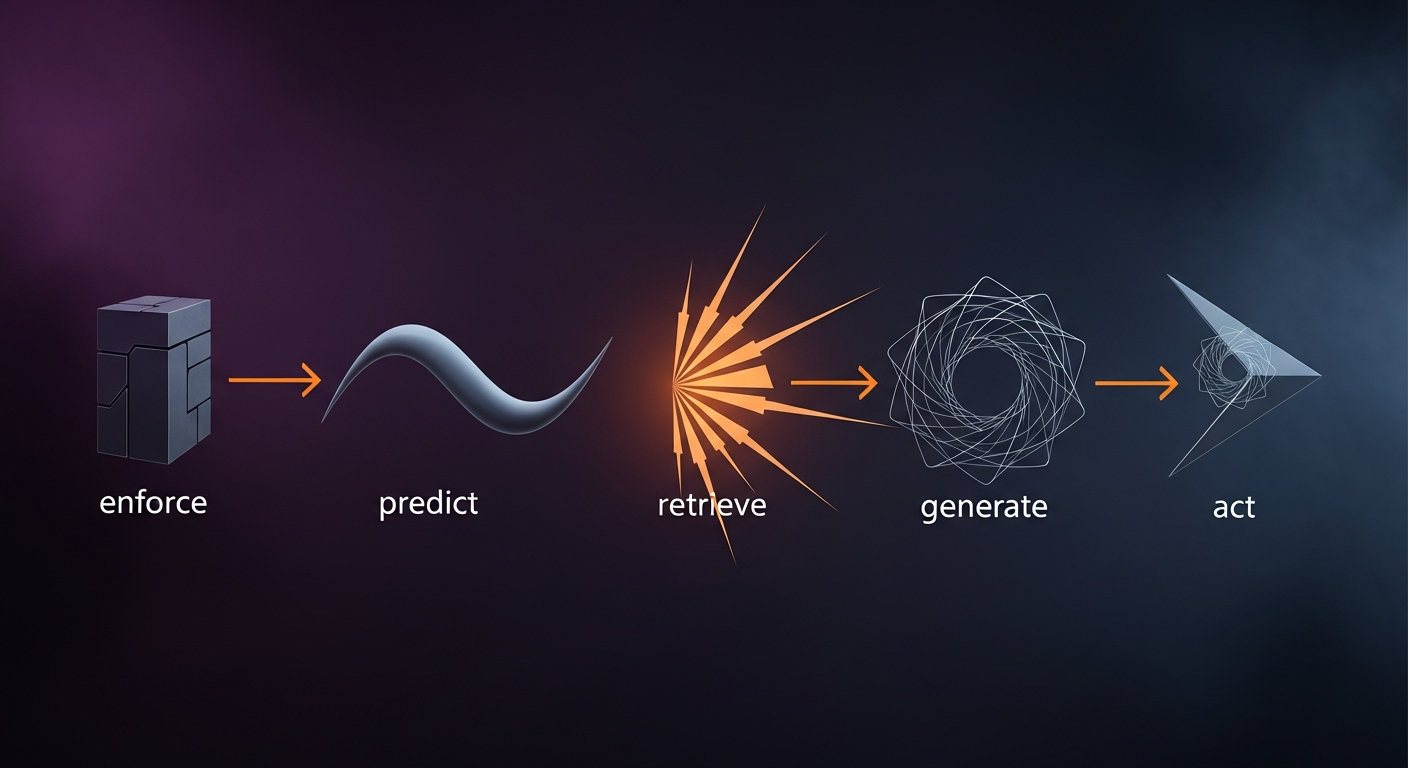

The Care + Code Mental Model

Here is the version that actually holds up:

Enforce → Predict → Retrieve → Generate → Act

With one rule that matters more than all the others:

Action is always downstream of constraint.

Rules constrain agents.

Models inform agents.

Retrieval grounds agents.

Generation assists agents.

Agents never replace governance.
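Here is the ordering as a single function. It is a minimal sketch under heavy simplification: the rule, the "model," and the retrieval step are hypothetical stand-ins, and the risk score is just asset age divided by 15. What it illustrates is the control flow. Rules run first and can stop everything, nothing proceeds without grounding documents, and the only action available at the end is queuing work for a human.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    stage_reached: str
    detail: str


def run_pipeline(request: dict) -> Outcome:
    # Enforce: deterministic rules run first and can stop everything.
    if request.get("amount", 0) > request.get("approval_limit", 0):
        return Outcome("enforce", "blocked: amount exceeds approval limit")

    # Predict: a score informs priority but cannot override the rules.
    risk = min(1.0, request.get("asset_age_years", 0) / 15)  # toy stand-in model

    # Retrieve: ground the work in existing documents (here, passed in directly).
    sources = request.get("matching_policies", [])
    if not sources:
        return Outcome("retrieve", "stopped: no grounding documents found")

    # Generate: draft a note for a human, citing what was retrieved.
    draft = f"Estimated risk {risk:.2f}; see {', '.join(sources)}"

    # Act: the only available action is queuing the draft for human approval.
    return Outcome("act", f"queued for approval: {draft}")


print(run_pipeline({"amount": 600_000, "approval_limit": 500_000}))
print(run_pipeline({
    "amount": 80_000,
    "approval_limit": 500_000,
    "asset_age_years": 9,
    "matching_policies": ["Capital policy 3.1"],
}))
```

A missing approval limit defaults to zero, so an incomplete request is blocked rather than waved through. That is a deliberate choice in this sketch: when in doubt, constraint wins.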

The Part That Actually Matters

The most effective AI systems in healthcare are boring on purpose.

They enforce where they must. They estimate where they can. They retrieve what already exists. They generate where humans still decide. They act only within strict bounds.

That is not conservative.

That is professional.

Care + Code exists to help teams make these distinctions before they ship something they cannot defend.

Not all AI is generative. And that is still the point.